XPathOnURL is an amazing function within the amazing SEO Tools plugin for Excel that can scrape specific pieces of content that you need when entering in a URL and the XPath of the content that you want to retrieve. This post isn’t going to be a quite to XPathOnURL, nor is it going to be a guide to XPath – instead what it is looking at is a way to retrieve multiple pieces of data when using XPathOnURL. If you are interested in playing around with XPath then download the XPath Helper plugin for Google Chrome that will help you get the XPath of the items you want to scrape.

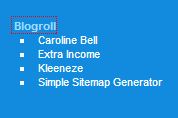

By default the XPathOnURL function within the SEO Tools plugin only brings back the first occurrence of the item within the DOM (Document Object Model – i.e the HTML of the page..). As an example lets take my blogroll;

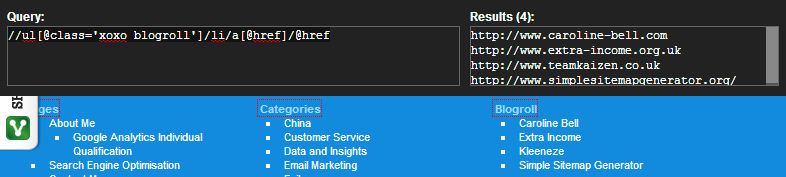

When using the XPath Helper plugin for Google Chrome, and tweaking the XPath a little to get what I need, you can see that the XPath present is showing that there are four links present within the blogroll;

All sounds good right. Well not quite, since when you copy this XPath into the XPathOnURL formula as part of SEO Tools then you will only get back the first URL instead of all four of them. Note, you cannot simply copy the exact XPath as shown in the screenshot above since you need to do something slightly different to scrape the HREF attribute using XPathOnURL – luckily I wrote a blog post just about that a while ago!

For the purpose of this blog post, I will use the XPath version for use with XPathOnURL without further explanation about that – read the previous blog post if this doesn’t make sense.

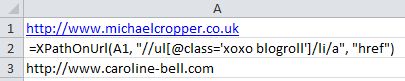

Anyways, back to the point. So when trying to scrape all four of the blogroll links using XPathOnURL you would think it would be as simple as entering the correct formula right? Unfortunately not. Blow is what happens when you try and do this;

While it is useful to get something back, it isn’t quite what I wanted. Instead I wanted all of the links to come back with this query which is more useful than just the first one. Depending on what you are scraping this can be a bit of a pain if there are quite a few pieces of content you want to scrape.

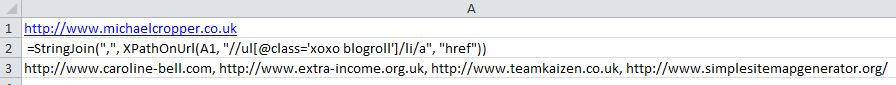

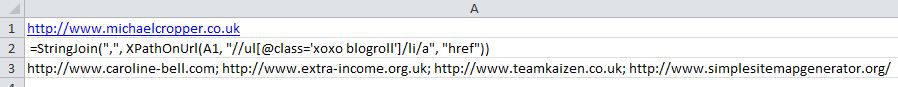

Luckily there is another function within SEO Tools for Excel which is the StringJoin(“,”, “……”) function (information) which helpfully joins together data and separates them by whatever you want – in this case, I have chosen a comma to separate the results by.

How this function can be used in conjunction with XPathOnURL is that you simple wrap it around the previous formula shown above and tell Excel to separate the results using a comma as seen in the example below;

This is a little more useful! So now we have all of the scraped data that we need. From here you can easily count the number of occurrences of a comma in the cell which will tell you how many pieces of data you have scraped. Or alternatively you could use the handy text to columns tool that is built into Excel by default to split out the data into separate columns (if you would prefer it in Rows instead then use the Transposetool)

On a small scale like this it is easy enough to do this manually. But recently I had need to do this for around 500 URLs each with an unknown amount of ‘bits’ on that I needed to count. Life is too short to be doing that kind of stuff manually so found a nice quick way of doing this using a few formulas and a bit of Excel magic. It turned out that the results were over 6500 ‘bits’ which would have taken me an awful long time to count manually!

So hopefully this formula can help save you some time when trying to return multiple nodes using XPathOnURL.

As a final note, as I was writing this post on another computer (and creating the formulas and screenshots for this post etc.), I noticed that the latest version of SEO Tools for Excel actually does this out of the box automatically. So if you don’t want to be doing all of this work manually then maybe it is just easier to upgrade to the latest version of the plugin  Below is what it looks like when using the latest version with all of the pieces of data you have scraped separated by a semi-colon ;

Below is what it looks like when using the latest version with all of the pieces of data you have scraped separated by a semi-colon ;

Thought I would carry on writing the post anyway as it would have saved me a bit of time earlier if I came across this post as I didn’t realise I wasn’t running the latest version!

Michael Cropper

Latest posts by Michael Cropper (see all)

- WGET for Windows - April 10, 2025

- How to Setup Your Local Development Environment for Java Using Apache NetBeans and Apache Tomcat - December 1, 2023

- MySQL Recursive Queries – MySQL While Loops – Fill Zero Sum Dates Between Dates - October 6, 2023

Wish more people posted help with SEOTools online. Seems scarce. Thank so much for the Guide. Very well written and easy to understand.

Glad you found it useful 🙂